A Better Way of Revealing Student Thinking in STEM

As students learn scientific concepts, they often hold a mix of scientific and non-scientific ideas that they struggle to integrate. Constructed response questions, in which students must explain phenomena in their own words, give students an opportunity to demonstrate their proficiency and can also reveal these mixed ideas.

Over years of research and development, the Automated Analysis of Constructed Response (AACR) collaboration has applied computerized analysis techniques, such as machine learning, to evaluate students' constructed responses. From this research, we've built tools like the Constructed Response Classifier (CRC tool). These tools are freely available on this website, so you can easily evaluate your students' responses.


Why Use Constructed Response?

- Requires students to demonstrate proficiency in their own words

- Reveals complex student thinking, which can be a mix of scientific and non-scientific ideas

- Is an authentic scientific practice

- Engages a different cognitive task (constructing an answer) than forced-choice selection

- Supports rich formative assessment practice

- Our items are developed to align with foundational concepts in STEM disciplines

Question of the Day

A species of grape has tendrils. How would biologists explain how a species of grape with tendrils evolved from an ancestral grape species without tendrils?

Register for an account to see more information about this question and the others available for you to use.

Why register for an account?

There is no charge to use this website, but you must register for an account. Registration is required so that instructors can upload and analyze their data.

Registered users have individualized homepages and access to our free CRC tool, which automatically scores students' constructed responses!

Coming Soon! We're building a BMC user dashboard that will give you quick access to custom tools and information. Visit your dashboard whenever you're ready to return to previous reports or start setting up new questions. From the dashboard you can upload new data, create a new course, or review previous reports. You can also follow discussion threads on topics you care about and connect with a large community of BMC users.

How do I get insight into student thinking?

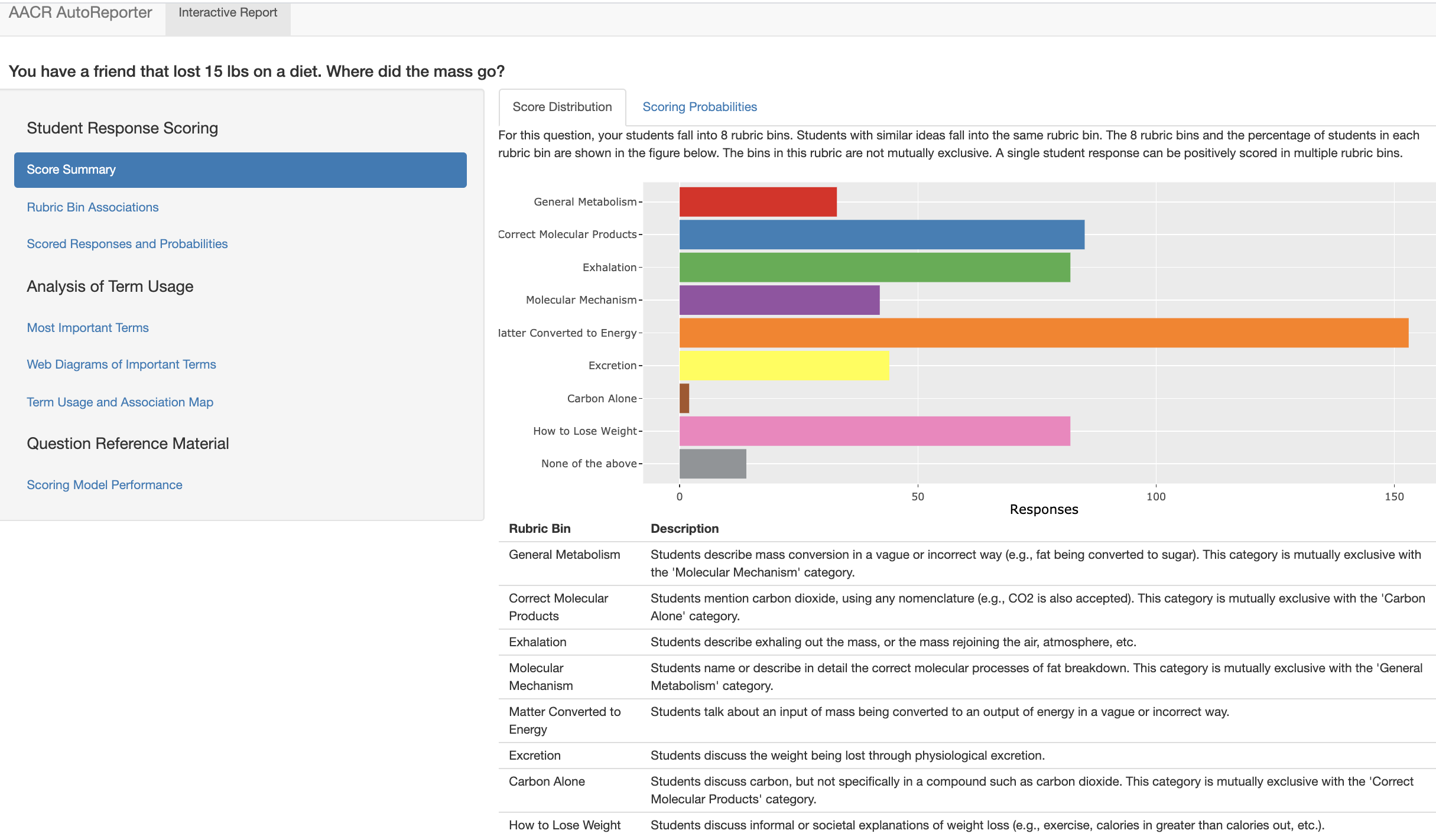

When you use our automated tools to score student responses in your course, the results are returned to you as an interactive report. These interactive course reports are designed to reveal key insights into student learning in STEM, helping educators pinpoint areas of success and areas to target for improvement. Each report provides a high-level overview of your class's understanding of the course material. Explore the complex mix of ideas your students express and identify the key STEM concepts in their writing.

Introductory Biology Professor from Michigan State University: "The AACR questions sort of show how [students] are thinking about those concepts a little bit more and how the interaction between concepts and how they interrelate to each other rather than regurgitating facts."

Introductory Biology Professor from Michigan State University: "I already understand that students struggle with this and this [report] provides me with more input to better understand why they struggle with it."


Announcements

AACR research presented at AERA 2024

Research from AACR on using AI to automatically evaluate formative assessments will be presented in several sessions at the AERA 2024 conference.